
By Sarah Kiefer

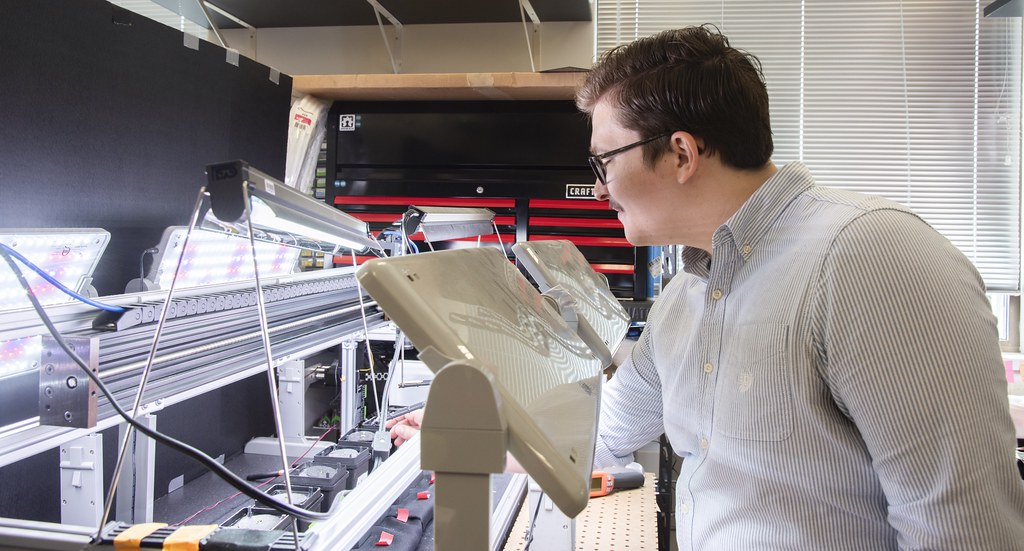

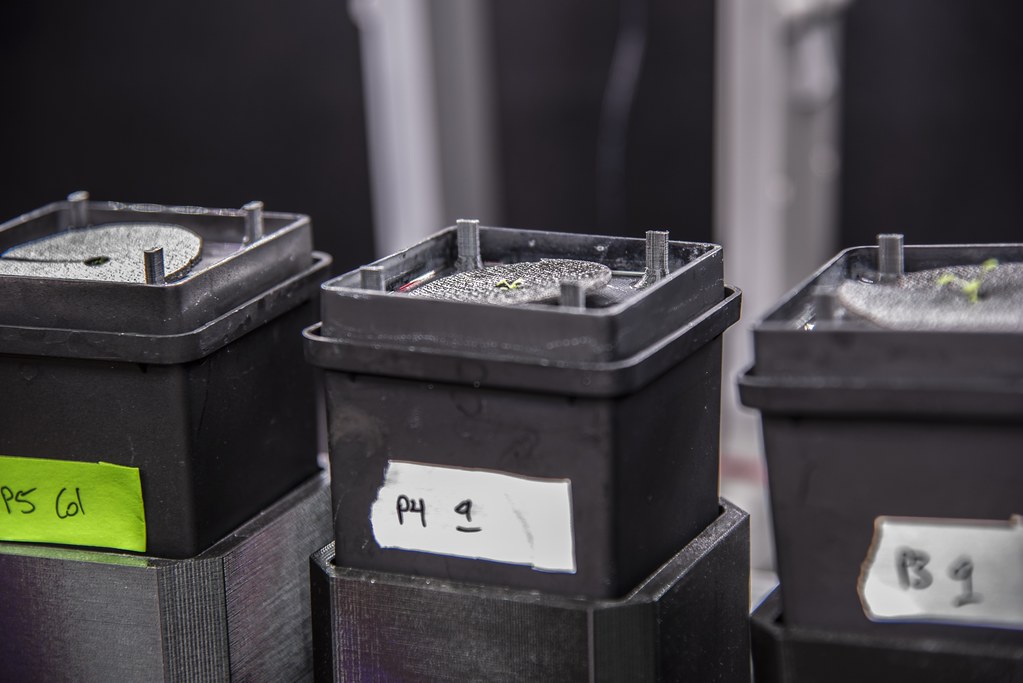

The LED lights dance as the OPEN leaf system powers up. The robot zips down the track to its preplanned destination, hovering above each plant sitting atop a 3D-printed mechanism while the camera snaps a shot. It repeats this same routine every 30 minutes.

This open-source, data-driven tool is one way scientists like those in the David Mendoza-Cózatl lab at Bond LSC work to simplify the tedious data collection required in plant research.

The OPEN leaf system, described in The Plant Journal in September 2023, uses modern technology to do its job better and gather results faster than other machines of the same kind. But this National Science Foundation-funded project is more than just a robot taking a picture of a plant.

“Phenotypes are a change in a plant that you can visualize. Our goal is to characterize phenotypes through time,” said Mendoza-Cózatl, a Bond LSC researcher, director of graduate studies and associate professor of Plant Science and Technology at the University of Missouri. “In my mind, time is one of the black boxes that we have not been able to solve because it is variable.”

Color plays a big role in measuring change in a plant. Using color quantization techniques that reduce colors to numbers, researchers can identify four basic colors to analyze. The machine captures RGB (red, green, blue) images with a camera and converts them into the LAB color space to do the heavy lifting. L stands for lightness, whereas A and B represent the green-to-magenta and blue-to-yellow color spectrums detected in something like a leaf of the plant.
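For readers curious about what that conversion involves, here is a minimal sketch of the standard sRGB-to-CIELAB formulas in plain Python. It is an illustration only, not code from the OPEN leaf project: the D65 reference white, the function names and the two sample pixel values are all assumptions made for this example.

```python
# Illustrative only: textbook sRGB -> CIELAB conversion (assumes a D65 white point).
# This is not taken from the OPEN leaf code base.

D65_WHITE = (0.95047, 1.00000, 1.08883)  # reference white (Xn, Yn, Zn)


def _linearize(channel):
    """Undo the sRGB gamma curve for one 0-255 channel value."""
    c = channel / 255.0
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def _f(t):
    """Piecewise cube-root function from the CIELAB definition."""
    delta = 6 / 29
    return t ** (1 / 3) if t > delta ** 3 else t / (3 * delta ** 2) + 4 / 29


def rgb_to_lab(r, g, b):
    """Convert 8-bit sRGB values to an (L, a, b) tuple."""
    rl, gl, bl = _linearize(r), _linearize(g), _linearize(b)
    # Linear RGB -> CIE XYZ
    x = 0.4124564 * rl + 0.3575761 * gl + 0.1804375 * bl
    y = 0.2126729 * rl + 0.7151522 * gl + 0.0721750 * bl
    z = 0.0193339 * rl + 0.1191920 * gl + 0.9503041 * bl
    # XYZ -> LAB, relative to the reference white
    fx, fy, fz = (_f(v / n) for v, n in zip((x, y, z), D65_WHITE))
    return 116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz)


green_leaf = rgb_to_lab(60, 140, 50)    # hypothetical healthy-leaf pixel
yellow_leaf = rgb_to_lab(200, 190, 60)  # hypothetical yellowing-leaf pixel
print(green_leaf)
print(yellow_leaf)
```

In this sketch the green pixel lands at a negative A value (toward green), and the yellowing pixel has a larger B value (toward yellow), which is the kind of number-to-number comparison the researchers describe.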

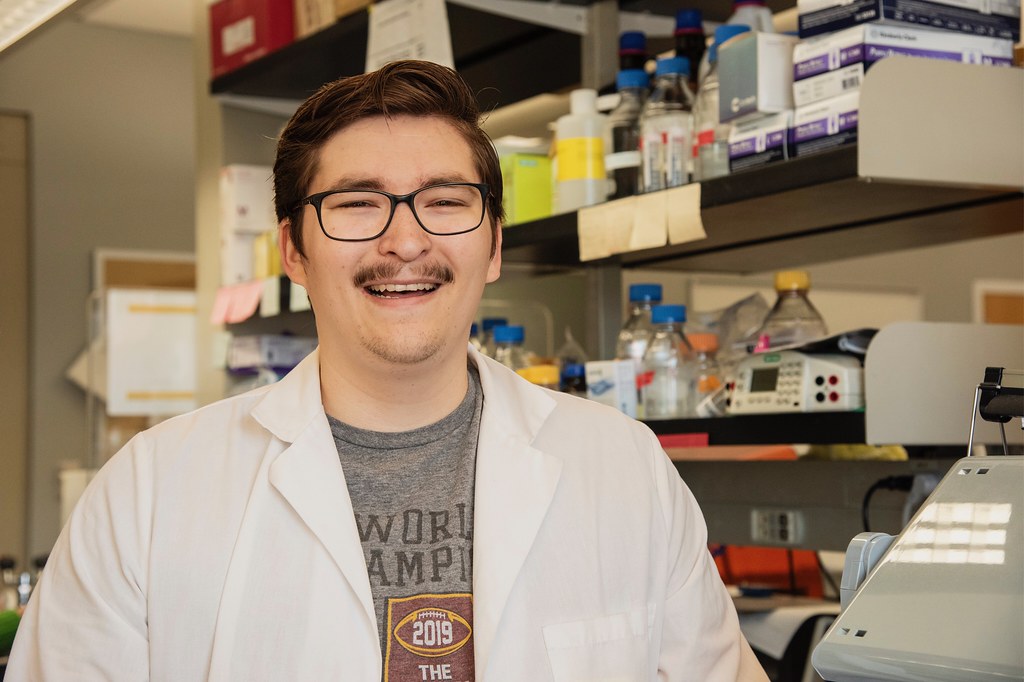

“Color is a subjective thing to a lot of people and it’s a lot easier to look at a number and say this number is more than this number, but saying this color is more yellow than this one is a harder thing to recognize,” said Landon Swartz, the Mizzou computer science graduate research assistant who engineered the system. “Our process correlates the human perception of color which is actually very different from the way a computer sees color.”

Once the colors are quantified, the real work begins: plotting the points.

This procedure morphs a primarily visual measurement into a mathematical one. Just like with numerical data, Swartz can take colors and observe how they change over time by using coordinates from his simplified combinations.
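As a rough illustration of treating color like numerical data, the sketch below measures how far a leaf's color coordinates drift from their starting point across successive images. The readings are invented for the example, the function names are hypothetical, and the distance used is the common CIE76 color difference (straight-line distance in LAB space), which may not be the measure the team uses.

```python
import math

# Hypothetical per-leaf average LAB colors, one entry per 30-minute image.
# These numbers are made up for illustration; they are not measured data.
leaf_series = [
    (0, (52.0, -42.0, 40.0)),   # (minutes elapsed, (L, a, b))
    (30, (52.5, -41.0, 41.0)),
    (60, (55.0, -35.0, 47.0)),
    (90, (60.0, -25.0, 55.0)),
]


def delta_e(lab1, lab2):
    """CIE76 color difference: straight-line distance in LAB space."""
    return math.dist(lab1, lab2)


def drift_from_start(series):
    """Return (minutes, distance from the first color) for each time point."""
    _, baseline = series[0]
    return [(minutes, delta_e(baseline, lab)) for minutes, lab in series]


for minutes, drift in drift_from_start(leaf_series):
    print(f"t={minutes:3d} min  color change = {drift:5.2f}")
```

Plotting those distances against time would turn a visual impression such as "this leaf is yellowing" into a curve that can be compared across leaves or plants.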

“It’s a really dynamic process and something that’s not thought about a lot, but because no one’s ever done it before, it’s very hard to convince people it’s accurate and try to visualize or explain the process to others,” Swartz said.

Mendoza-Cózatl said this way of quantifying and recording takes the system to the next level.

“We thought this idea of describing color through time was solved a long time ago. Turns out, it was not,” Mendoza-Cózatl said. “Extracting the color of a plant has been done, but representing the color to viewers over time is something that has not been done.”

The team also set its system apart from others by designing it for a hybrid work environment, letting many collaborators take part through the Slack communication app. The app can be used to chat directly with the robot itself.

“We are trying to advance the field of phenotyping as a group,” Mendoza-Cózatl said. “It’s not just my machine, it can be your machine or anyone’s machine.”

Slack was first suggested by Drew Dahlquist, a former undergraduate in the Mendoza-Cózatl lab, during the COVID-19 pandemic when research labs needed to cut out the middleman on projects that require collaborators from afar.

“Let’s say I’m sitting in my basement, the experiment is running and I want to know how it’s going. I can message the machine ‘hey, can you send me the last couple of pictures that you took’ and the machine will send them,” Mendoza-Cózatl said. “The element that sets this system apart is you can then share it with colleagues, students, or anyone.”

Swartz and his collaborators worked to incorporate the details of the plant, not just the entire rosette — the circular arrangement of leaves on the plant. Their system recognizes each individual leaf without bias.

But how can observation of a leaf be biased in the first place?

Traditionally, these systems prompt the user to draw a line around each leaf for it to be recognized, which is a subjective observation based on how each person draws their boundaries.

The OPEN leaf system’s computer algorithm automatically recognizes each leaf to be analyzed separately.

“To me it’s really exciting because I was trained as a biochemist then I decided to work with plants. I never thought I would be publishing papers about computer vision and phenotyping,” Mendoza-Cózatl said. “It gives a fantastic example that science is really open. We saw a need, so we tackled the problem and came up with a solution that is very rewarding.”

Their new machine also makes the database more accessible, and it is cheaper than similar machines. The OPEN leaf system costs less than $3,000 to build, and its blueprints and build instructions are available to anyone who wants to put it together.

“This type of project shows that you can do a lot with a little because you don’t need to pay thousands of dollars to get the same amount of insight into your research,” Swartz said. “This project has become a tool that we are going to use for papers to come, and we are hoping that other people will start to use it for their papers as well.”

The collaboration between engineers and biologists helped them address the evolving nature and nuanced challenges of the project.

“The most interesting part about this project is that there is a novelty to working in an interdisciplinary manner like this,” Swartz said. “Usually, how it works is someone comes to you with a problem and you present a solution. But here we are coming up with solutions to find the problems, which I think is a very fruitful approach.”

Swartz is already working on his next big project — an OPEN root system. This machine will accomplish similar goals but apply to the root system of the plants instead of the leaves.

The paper, “OPEN leaf: an open-source cloud-based phenotyping system for tracking dynamic changes at leaf-specific resolution in Arabidopsis,” by Landon Swartz, Suxing Liu, Drew Dahlquist, Skyler T. Kramer, Emily S. Walter, Samuel A. McInturf, Alexander Bucksch and David G. Mendoza-Cózatl, was first published in The Plant Journal on September 21, 2023.